Neural Networks and Deep Learning: The Architecture of Learning

💡 Quick Tip

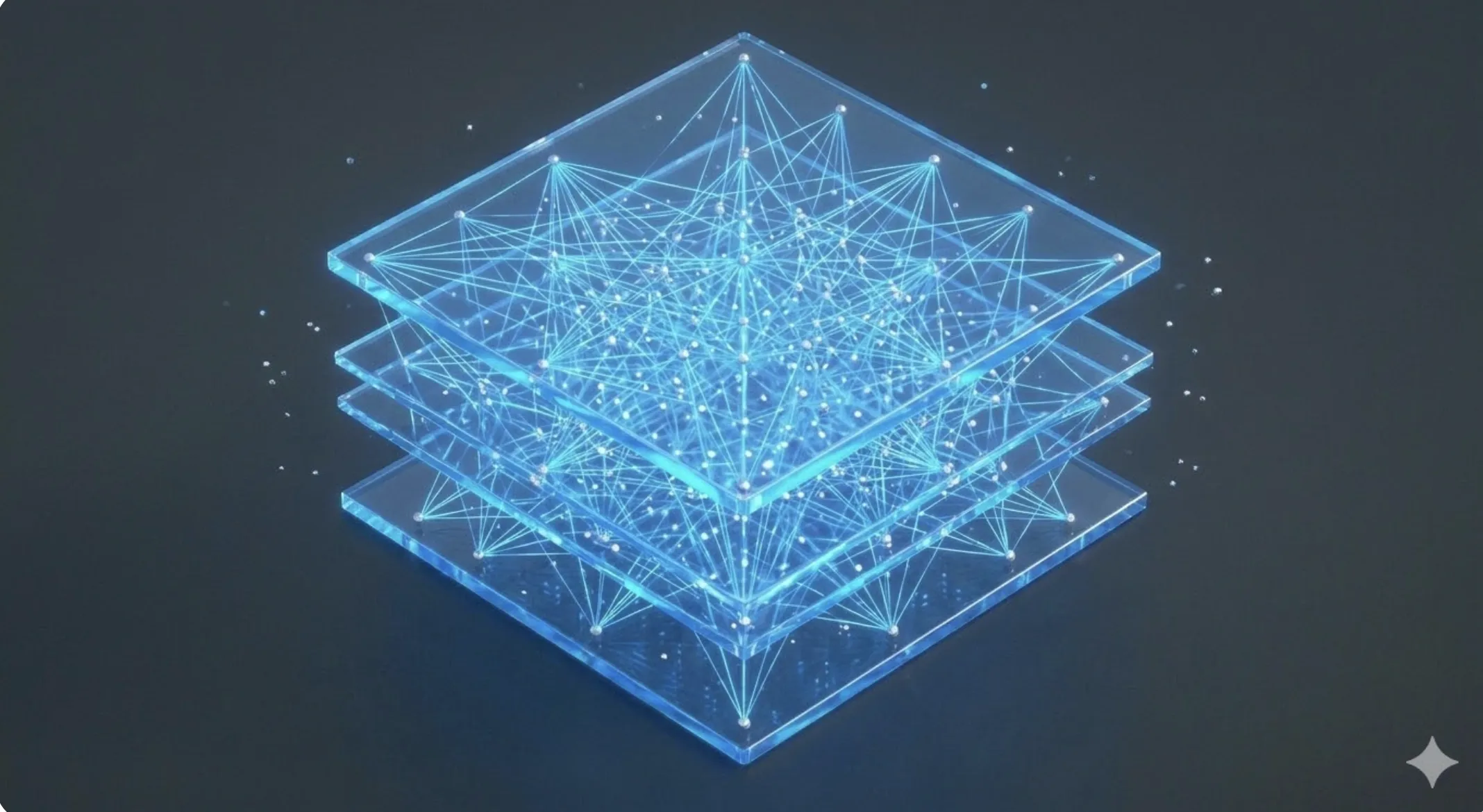

Pro Tip: Deep Learning is an evolution of neural networks that stacks multiple hidden layers, each one extracting more complex features from the output of the layer before it.

When NASA had to improvise a CO2 filter for Apollo 13, it did so by understanding the basic principles of air flow and pressure. That is real engineering. In contrast, much of the "intelligence" we consume today is treated as an expensive remote control: we press a button and hope for a miracle, without understanding that neural networks are, in essence, mathematical structures made of bits that try to represent a complex world of atoms.

The big problem today is that companies build networks that act as data islands, disconnected from the real flow of operations. To solve this, we return to the Digital Twin analogy. As Cinto Casals, AI Engineer, describes it, a deep neural network is nothing more than the inference engine of a Digital Twin, in charge of predicting future states from past data organized under a previously defined data architecture.

We implement "Step Zero" as a methodological differentiator. We don't start by buying massive GPUs (atoms); we start by designing how the training data (bits) will be organized. The vision is invisible technology, where Deep Learning merges with the environment and optimizes logistics or energy consumption autonomously, without the user having to interact with an interface. AI becomes as natural as electricity.

If your AI strategy is based only on buying computing power without a clear information architecture, are you doing engineering or are you just feeding a consumption machine you don't understand?

📊 Practical Example

Real-World Scenario: Training a Component Quality Classifier

Step 1: Dataset Preparation. Collect 10,000 labeled data points from component tests, and set part of them aside for the validation in Step 4. Normalize input values to the range 0 to 1, since neural networks generally converge faster on scaled inputs.
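
The normalization in Step 1 could be sketched as follows. This is a minimal illustration using only the Python standard library; the function names `fit_min_max` and `apply_min_max` and the three sample rows are hypothetical, not part of the original scenario. The minimums and maximums are computed from the training rows only, so the same scaling can later be reused on unseen data.

```python
def fit_min_max(rows):
    """Return per-column minimums and maximums computed from the given rows."""
    columns = list(zip(*rows))
    return [min(c) for c in columns], [max(c) for c in columns]


def apply_min_max(rows, mins, maxs):
    """Scale each column with (x - min) / (max - min); constant columns map to 0.0."""
    scaled = []
    for row in rows:
        scaled.append([
            (x - lo) / (hi - lo) if hi > lo else 0.0
            for x, lo, hi in zip(row, mins, maxs)
        ])
    return scaled


# Hypothetical readings: one row per component, five sensor values per row.
train_rows = [
    [20.0, 1.2, 300.0, 0.05, 7.0],
    [25.0, 1.8, 350.0, 0.10, 7.5],
    [30.0, 1.5, 400.0, 0.15, 8.0],
]

mins, maxs = fit_min_max(train_rows)
train_scaled = apply_min_max(train_rows, mins, maxs)
```

Readings that fall outside the range seen during training will land slightly below 0 or above 1 when scaled this way, which is usually acceptable but worth checking on a real line.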

Step 2: Topology Definition. Create a network with an input layer of 5 neurons (sensors), two hidden layers of 64 neurons each with ReLU activation, and an output layer with one neuron and a Sigmoid function.
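
To make the topology in Step 2 concrete, here is a rough sketch of its forward pass in plain Python. The helper names, the Glorot-style uniform initialization, and the sample input are assumptions chosen for illustration; a real project would more likely use a deep learning framework. The sketch also counts the trainable parameters, which is useful when the target is a microcontroller.

```python
import math
import random

LAYER_SIZES = [5, 64, 64, 1]  # inputs, hidden layer 1, hidden layer 2, output


def init_layer(n_in, n_out, rng):
    """Glorot-style uniform weights and zero biases (an illustrative choice)."""
    limit = math.sqrt(6.0 / (n_in + n_out))
    weights = [[rng.uniform(-limit, limit) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(W . x + b) for every neuron."""
    return [activation(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def forward(x, layers):
    """ReLU on the hidden layers, sigmoid on the single output neuron."""
    h = x
    for weights, biases in layers[:-1]:
        h = dense(h, weights, biases, relu)
    weights, biases = layers[-1]
    return dense(h, weights, biases, sigmoid)[0]


rng = random.Random(0)
layers = [init_layer(n_in, n_out, rng)
          for n_in, n_out in zip(LAYER_SIZES, LAYER_SIZES[1:])]

# Weights plus biases across all three layers.
n_params = sum(len(w) * len(w[0]) + len(b) for w, b in layers)

# Hypothetical, already normalized sensor reading.
probability = forward([0.2, 0.5, 0.1, 0.9, 0.4], layers)
```

With these layer sizes the network has 4,609 trainable parameters (384 + 4,160 + 65), a small enough footprint to be plausible for an embedded target.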

Step 3: Training. Use the 'Binary Crossentropy' loss function and the 'Adam' optimizer. Train for 50 epochs and monitor how the loss decreases from one epoch to the next.
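
The two ingredients named in Step 3 can be illustrated with a small, self-contained sketch. To keep it short, it trains a single sigmoid neuron rather than the full network from Step 2, and the toy dataset, the `Adam` class, and the learning rate of 0.05 are assumptions made for the example. It records the loss once per epoch, which is the value you would monitor.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def binary_crossentropy(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)


class Adam:
    """Adam update rule with bias-corrected first and second moments."""

    def __init__(self, n_params, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = [0.0] * n_params
        self.v = [0.0] * n_params
        self.t = 0

    def step(self, params, grads):
        self.t += 1
        updated = []
        for i, (p, g) in enumerate(zip(params, grads)):
            self.m[i] = self.beta1 * self.m[i] + (1 - self.beta1) * g
            self.v[i] = self.beta2 * self.v[i] + (1 - self.beta2) * g * g
            m_hat = self.m[i] / (1 - self.beta1 ** self.t)
            v_hat = self.v[i] / (1 - self.beta2 ** self.t)
            updated.append(p - self.lr * m_hat / (math.sqrt(v_hat) + self.eps))
        return updated


# Toy data (hypothetical): one normalized feature, label 1 for larger values.
xs = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]
ys = [0, 0, 0, 1, 1, 1]

params = [0.0, 0.0]  # weight and bias of the single neuron
optimizer = Adam(n_params=2, lr=0.05)
history = []

for epoch in range(50):
    w, b = params
    probs = [sigmoid(w * x + b) for x in xs]
    history.append(binary_crossentropy(ys, probs))
    # For sigmoid + binary cross-entropy, dLoss/dz = p - y.
    grad_w = sum((p - y) * x for p, y, x in zip(probs, ys, xs)) / len(xs)
    grad_b = sum(p - y for p, y in zip(probs, ys)) / len(xs)
    params = optimizer.step(params, [grad_w, grad_b])
```

Comparing `history[0]` with `history[-1]` shows whether the loss went down over the 50 epochs; on a real dataset the same curve would also be tracked on held-out data to spot overfitting.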

Step 4: Validation. Evaluate the network on data it did not see during training. If accuracy on that data exceeds 98%, deploy the model to the production line microcontroller.
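
The deployment check in Step 4 might look like the sketch below. The 0.5 decision threshold, the function names, and the eight validation samples are illustrative assumptions; only the 98% bar comes from the scenario above.

```python
def accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of samples whose thresholded prediction matches the label."""
    predictions = [1 if p >= threshold else 0 for p in y_prob]
    correct = sum(1 for y, y_hat in zip(y_true, predictions) if y == y_hat)
    return correct / len(y_true)


def ready_to_deploy(acc, required=0.98):
    """Deployment gate: accuracy must strictly exceed the required level."""
    return acc > required


# Hypothetical held-out labels and the probabilities the model produced.
y_val = [1, 0, 1, 1, 0, 0, 1, 0]
p_val = [0.93, 0.08, 0.71, 0.40, 0.12, 0.03, 0.88, 0.61]

val_accuracy = accuracy(y_val, p_val)    # 0.75: two of eight are wrong
deploy = ready_to_deploy(val_accuracy)   # False, below the 98% bar
```

If defective components are rare in the dataset, accuracy alone can look high even when most defects are missed, so it is worth inspecting the errors per class before relying on this gate.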