Docker Containers: Isolation and Portability

💡 Quick Tip

Pro Tip: A container is not a virtual machine; it shares the host operating system's kernel.

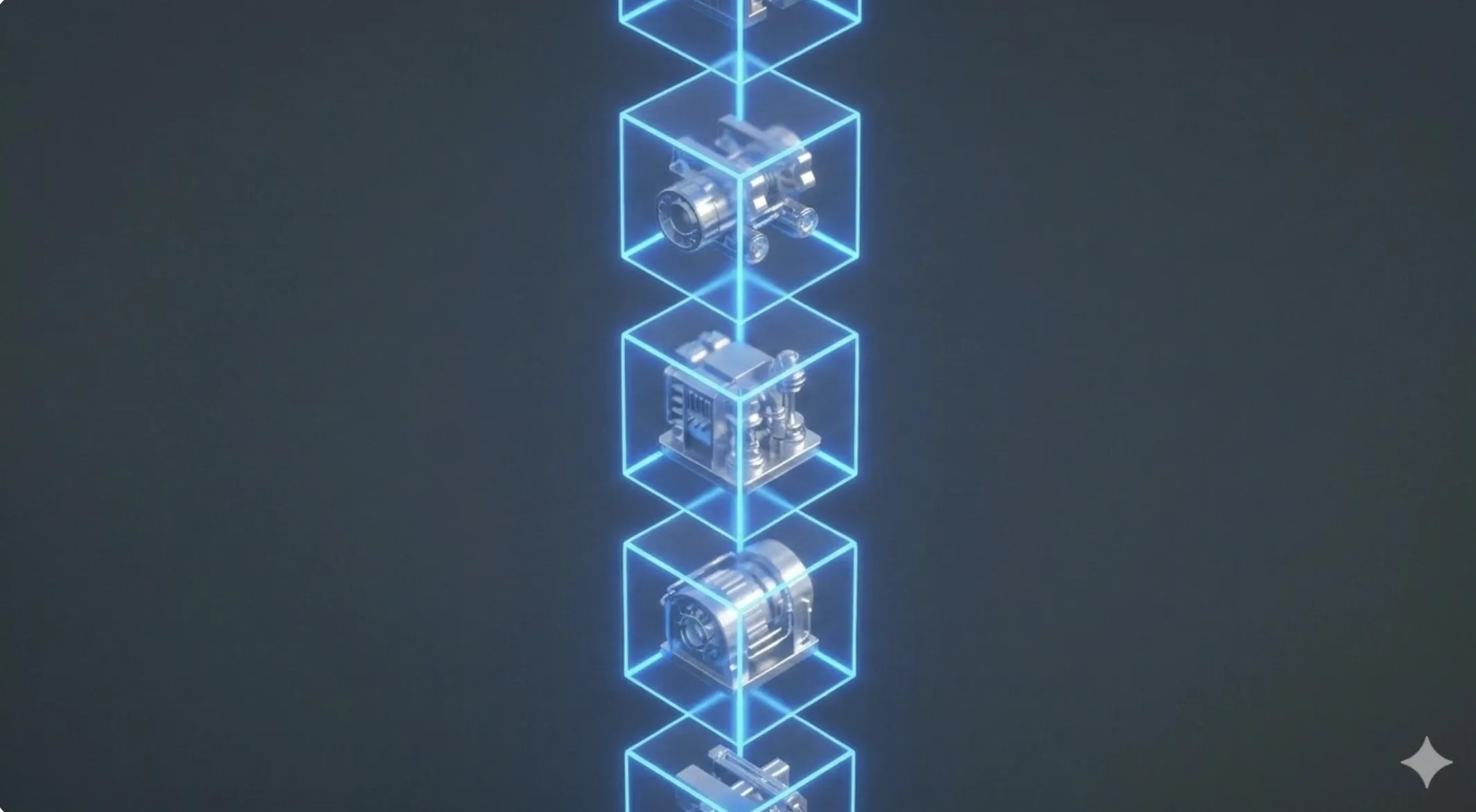

What is Containerization?

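Docker popularized a practical way to package software. A container is a standard unit of software that packages code together with all its dependencies (libraries, configuration, binaries) so that the application runs quickly and reliably when moved from one computing environment to another. Isolation relies on Linux kernel features: namespaces limit what a process can see (process IDs, network interfaces, mount points), and control groups (cgroups) limit the resources it can use (CPU, memory, I/O).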

Image Layers and Efficiency

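Docker images are built from layers. Dockerfile instructions that change the filesystem (such as RUN, COPY, and ADD) each create a new read-only layer; other instructions only add metadata to the image.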

- Reuse: If 10 apps use the same Ubuntu image, Docker keeps only one copy of that base layer on disk.

- Speed: Only changed layers are transferred when an image is updated, so pushes and pulls are much faster than copying the whole image each time.
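
The two points above can be illustrated with a toy model. The sketch below is not how Docker is implemented: it assumes a layer can be identified by hashing its parent layer together with the build instruction, whereas real Docker identifies layers by a digest of their content. The names `layer_id`, `build_image`, and `LayerStore`, and the ten example apps, are hypothetical.

```python
import hashlib


def layer_id(parent_id: str, instruction: str) -> str:
    """Derive a layer ID from the parent layer and the build instruction.

    Simplifying assumption: real layer digests are computed from content.
    """
    return hashlib.sha256(f"{parent_id}\n{instruction}".encode()).hexdigest()[:12]


def build_image(instructions: list[str]) -> list[str]:
    """Return the ordered list of layer IDs for a sequence of build steps."""
    layers, parent = [], ""
    for instruction in instructions:
        parent = layer_id(parent, instruction)
        layers.append(parent)
    return layers


class LayerStore:
    """Toy local store: each distinct layer ID is kept only once."""

    def __init__(self) -> None:
        self.layers: set[str] = set()

    def pull(self, image: list[str]) -> list[str]:
        """Store an image and return only the layers that had to be transferred."""
        missing = [layer for layer in image if layer not in self.layers]
        self.layers.update(missing)
        return missing


base = ["FROM ubuntu:24.04", "RUN apt-get update && apt-get install -y python3"]
store = LayerStore()

# Ten hypothetical apps that share the same two base steps.
transferred = [
    len(store.pull(build_image(base + [f"COPY app{i}/ /srv/app"])))
    for i in range(10)
]
print(transferred)        # [3, 1, 1, 1, 1, 1, 1, 1, 1, 1]
print(len(store.layers))  # 12 layers stored instead of 30

# Changing only the last step of app0 transfers a single new layer.
updated = build_image(base + ["COPY app0-v2/ /srv/app"])
print(len(store.pull(updated)))  # 1
```

The first app transfers all three layers; every later app, and every update that touches only the final step, transfers one.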

📊 Practical Example

Real-World Scenario: Deploying a Local "Full Stack" Environment

Step 1: docker-compose.yml Creation. Define three services (PostgreSQL, Redis, and a Python API). Use a Docker volume so that database data persists even when the container is removed and recreated.

Step 2: Network Isolation. Docker Compose creates a private virtual network for the project. Services on that network can reach each other by service name, so the API connects to the database using host: postgres.

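Step 3: Environment Startup. Run docker-compose up -d (docker compose up -d with Compose v2). Docker downloads any official images that are not already cached locally and starts three isolated containers, usually within seconds once the images are present.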

Step 4: Portability. New developers only need to run the same command, which eliminates the classic "it works on my machine" problem.
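
To make Step 2 concrete, here is a minimal sketch of how the Python API might build its database connection string. It assumes the PostgreSQL service is named postgres in docker-compose.yml, as in the scenario. The environment variable names (`DB_HOST`, `DB_PORT`, `DB_USER`, `DB_PASSWORD`, `DB_NAME`) and the default values are hypothetical and not part of any Docker convention.

```python
import os
from typing import Mapping, Optional
from urllib.parse import quote


def database_url(env: Optional[Mapping[str, str]] = None) -> str:
    """Build a PostgreSQL URL whose host defaults to the Compose service name."""
    env = os.environ if env is None else env
    # Inside the Compose network, Docker's built-in DNS resolves the
    # service name "postgres" to the database container's address.
    host = env.get("DB_HOST", "postgres")
    port = env.get("DB_PORT", "5432")  # PostgreSQL's default port
    # Percent-encode credentials so special characters cannot break the URL.
    user = quote(env.get("DB_USER", "app"), safe="")
    password = quote(env.get("DB_PASSWORD", ""), safe="")
    name = env.get("DB_NAME", "app")
    return f"postgresql://{user}:{password}@{host}:{port}/{name}"


print(database_url({"DB_PASSWORD": "s3cret"}))
# postgresql://app:s3cret@postgres:5432/app
```

Because the host comes from configuration rather than a hard-coded IP address, the same code works on every developer's machine, and overriding `DB_HOST` is enough to point the API at a database outside the Compose network.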