AMD EPYC vs Threadripper PRO: High-Performance Local AI

💡 Quick Tip

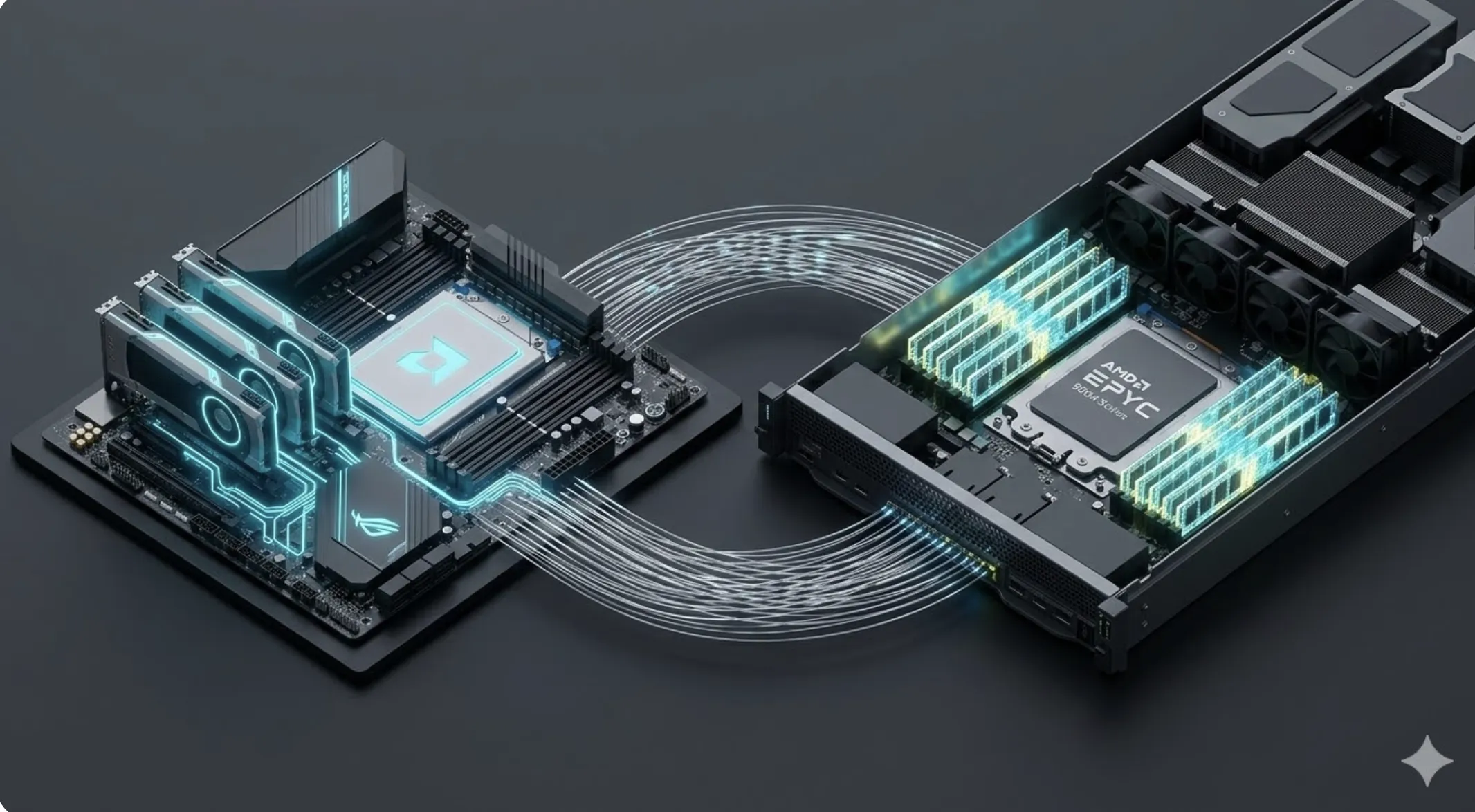

EPYC or Threadripper PRO for local AI? In 2026, the choice comes down to memory capacity and bandwidth on one side and the clock speed needed to orchestrate large language models on the other, all on high-performance workstations.

The Cray-1 and the Obsession with Bandwidth

In 1975, Seymour Cray designed the Cray-1 with carefully controlled wire lengths to keep electrical signals in step with the clock. It was engineering of absolute precision. Today, local AI faces a similar challenge: memory bandwidth. When comparing an AMD EPYC with a Threadripper PRO, sound engineering looks not just at core count but at how many memory channels the platform provides to feed the model. It is the difference between a generic server solution and a workstation optimized for a single skilled developer.
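A quick way to see why channel count matters is to compute theoretical peak memory bandwidth: channels × transfer rate × bytes per transfer. Current SP5-socket EPYC parts expose 12 DDR5 channels per socket and Threadripper PRO WX parts expose 8. The minimal sketch below assumes 64-bit (8-byte) channels, DDR5-4800 for the 12-channel case and DDR5-5200 for the 8-channel case; those speeds and the 40 GB model size are illustrative assumptions, and sustained bandwidth is always below peak. The second function applies a common rule of thumb for memory-bound LLM decoding on the CPU: each generated token streams all weights once, so bandwidth divided by model size caps tokens per second.

```python
# Theoretical peak DRAM bandwidth = channels x transfer rate (MT/s) x bytes per transfer.
# Assumption: each DDR5 channel is treated as 64 bits (8 bytes) wide; ECC bits are excluded.
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(channels: int, mts: int) -> float:
    """Return theoretical peak bandwidth in GB/s (decimal units, 1 GB = 1e9 bytes)."""
    return channels * mts * 1e6 * BYTES_PER_TRANSFER / 1e9


def max_tokens_per_second(bandwidth_gbs: float, model_gb: float) -> float:
    """Upper bound for memory-bound decoding: every token reads all weights once."""
    return bandwidth_gbs / model_gb


if __name__ == "__main__":
    # Illustrative configurations; check the DIMM speeds your platform actually supports.
    configs = {
        "12-channel DDR5-4800": (12, 4800),
        "8-channel DDR5-5200": (8, 5200),
    }
    model_gb = 40.0  # hypothetical size of the model weights held in system memory
    for name, (channels, mts) in configs.items():
        bw = peak_bandwidth_gbs(channels, mts)
        cap = max_tokens_per_second(bw, model_gb)
        print(f"{name}: {bw:.1f} GB/s peak, at most {cap:.1f} tokens/s")
```

Under these assumptions the 12-channel configuration reaches 460.8 GB/s and the 8-channel one 332.8 GB/s, which is why the extra channels only pay off when the workload is actually bandwidth-bound.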

The Thesis: The Rack Server as an Expensive Remote Control

Buying a 128-core EPYC server for a single AI developer can amount to operating an expensive remote control. If the workflow does not exploit the 12 memory channels, the hardware sits underutilized. Threadripper PRO, with its higher clock frequencies, is often the better investment for agentic orchestration tasks, where single-thread speed matters as much as massive GPU parallelism.
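The single-thread argument can be made concrete with Amdahl's law: if a fraction f of an agent loop's wall time is CPU-bound serial work (tool calls, parsing, tokenization) and a faster clock speeds that part up by a factor k, the whole loop speeds up by 1 / ((1 - f) + f / k). This is a minimal sketch; it assumes the serial CPU work scales linearly with clock speed and ignores IPC and memory-stall differences between chips, and the 40% and 5% fractions and 1.3× clock ratio are hypothetical.

```python
def amdahl_speedup(cpu_fraction: float, clock_ratio: float) -> float:
    """Overall speedup when only the CPU-bound serial fraction of wall time gets faster.

    Amdahl's law: S = 1 / ((1 - f) + f / k), where f is the fraction of time that
    benefits (here: single-threaded orchestration) and k is how much faster it becomes.
    Assumes the serial work scales linearly with clock speed.
    """
    if not 0.0 <= cpu_fraction <= 1.0:
        raise ValueError("cpu_fraction must be in [0, 1]")
    if clock_ratio <= 0:
        raise ValueError("clock_ratio must be positive")
    return 1.0 / ((1.0 - cpu_fraction) + cpu_fraction / clock_ratio)


if __name__ == "__main__":
    # Hypothetical: a 1.3x faster clock applied to two different workloads.
    for f in (0.40, 0.05):
        print(f"CPU-bound fraction {f:.0%}: overall speedup {amdahl_speedup(f, 1.3):.3f}x")
```

With 40% of the loop CPU-bound the overall speedup is about 1.10×, while with 5% it is barely 1.01×, so the value of higher clocks depends on measuring how much of the workflow is actually serial.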

The Diagnosis: Computing Islands and PCIe Bottlenecks

A common failure is creating computing islands: a powerful CPU isolated from its GPUs by too few PCIe lanes. According to Cinto Casals, AI Engineer, the key is balance. A system that cannot move data at 128 GB/s (roughly the combined two-way bandwidth of a PCIe 5.0 x16 link) between system memory and GPU VRAM becomes an inefficient silo that slows down local model training.
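To see how lane count translates into bandwidth, the sketch below computes approximate PCIe link bandwidth from the per-lane transfer rate, the lane count, and the 128b/130b encoding used since PCIe 3.0. It deliberately ignores packet and protocol overhead, so real throughput will be somewhat lower; the 40 GB transfer size is a hypothetical example.

```python
# Per-lane transfer rate in GT/s by PCIe generation; 3.0 and later use 128b/130b encoding.
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING = 128 / 130


def pcie_gbs_per_direction(gen: int, lanes: int) -> float:
    """Approximate PCIe link bandwidth per direction in GB/s (ignores protocol overhead)."""
    return GT_PER_S[gen] * lanes * ENCODING / 8


def transfer_seconds(size_gb: float, gen: int, lanes: int) -> float:
    """Lower bound on time to copy size_gb from host RAM to one GPU over a single link."""
    return size_gb / pcie_gbs_per_direction(gen, lanes)


if __name__ == "__main__":
    for gen, lanes in [(5, 16), (5, 8), (4, 16)]:
        bw = pcie_gbs_per_direction(gen, lanes)
        print(f"PCIe {gen}.0 x{lanes}: ~{bw:.1f} GB/s each way, ~{2 * bw:.1f} GB/s both ways")
    # Hypothetical 40 GB of weights or data staged from system memory.
    print(f"40 GB over PCIe 5.0 x16: at least {transfer_seconds(40, 5, 16):.2f} s")
    print(f"40 GB over PCIe 4.0 x8:  at least {transfer_seconds(40, 4, 8):.2f} s")
```

A PCIe 5.0 x16 link works out to about 63 GB/s in each direction, while a GPU starved down to PCIe 4.0 x8 gets a quarter of that, which is exactly the kind of island the paragraph above warns about.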

Future Vision: The Invisible Technology of Local Inference

The future points toward invisible technology, where local workstations process foundation models silently. In 2026, the line between CPU and NPU will blur, and systems like Threadripper will integrate AI accelerators powerful enough that relying on server clusters becomes an option rather than a prerequisite for innovation.

📊 Practical Example

Real Scenario: Workstation for LLM

A language model developer configures a Threadripper PRO machine to take advantage of its high clock frequencies for data preprocessing. With 4 high-end GPUs attached directly to the processor's PCIe lanes, rather than through a switch or the chipset, the system avoids extra latency and supports fast training iterations.
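Before committing to a four-GPU build, it is worth checking that the CPU has enough directly attached lanes to give every card a full x16 link. The sketch below is a simple lane-budget check; the 128-lane figure, the `Device` type, and the four x4 NVMe drives are illustrative assumptions, and real motherboards may route some slots through the chipset or share lanes between slots.

```python
from dataclasses import dataclass


@dataclass
class Device:
    """A PCIe device and the link width it needs (hypothetical helper type)."""
    name: str
    lanes: int


def lane_budget(cpu_lanes: int, devices: list[Device]) -> int:
    """Return CPU PCIe lanes left after giving every device its full link width.

    Raises ValueError if the devices need more lanes than the CPU provides, which in
    practice means narrower links, bifurcation, or a PCIe switch.
    """
    needed = sum(d.lanes for d in devices)
    if needed > cpu_lanes:
        raise ValueError(f"need {needed} lanes but CPU provides only {cpu_lanes}")
    return cpu_lanes - needed


if __name__ == "__main__":
    # Assumption: 128 CPU-attached lanes; check your exact CPU and board block diagram.
    gpus = [Device(f"gpu{i}", 16) for i in range(4)]
    nvme = [Device(f"nvme{i}", 4) for i in range(4)]
    print("lanes left:", lane_budget(128, gpus + nvme))  # 128 - 64 - 16 = 48
```

If the check fails, the GPUs end up on narrower links or behind a switch, reintroducing the latency and bandwidth limits the build was meant to avoid.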